Authors

Daniel Y. Fu, Will Crichton, James Hong, Xinwei Yao, Haotian Zhang, Anh Truong, Avanika Narayan, Maneesh Agrawala, Christopher Ré, Kayvon Fatahalian

Abstract

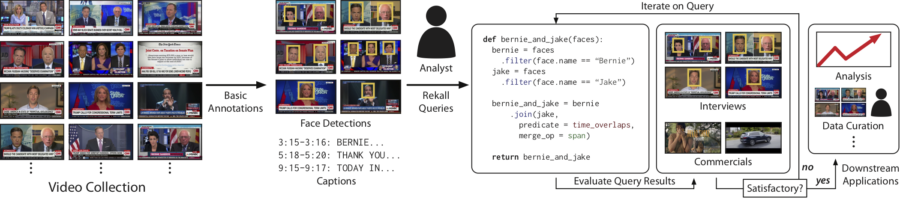

Many real-world video analysis applications require the ability to identify domain-specific events, such as interviews and commercials in TV news broadcasts, or action sequences in film. Unfortunately, pre-trained models to detect all the events of interest in video may not exist, and training new models from scratch can be costly and labor-intensive. In this paper, we explore the utility of specifying new events in video in a more traditional manner: by writing queries that compose the outputs of existing, pre-trained models. To write these queries, we have developed Rekall, a library that exposes a data model and programming model for compositional video event specification. Rekall represents video annotations from different sources (object detectors, transcripts, etc.) as spatiotemporal labels associated with continuous volumes of spacetime in a video, and provides operators for composing labels into queries that model new video events. We demonstrate the use of Rekall in analyzing video from cable TV news broadcasts and films. In these efforts, analysts were able to quickly (in a few hours to a day) author queries to detect new events. These queries were often on par with or more accurate than learned approaches (6.5 F1 points more accurate on average).
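To make the data model in the abstract concrete, the following is a minimal, self-contained Python sketch of the idea: labels that occupy a volume of spacetime, plus operators that compose them into a new event. It does not use the Rekall library, and it should not be read as Rekall's actual API. The names `Label`, `join`, `coalesce`, `overlaps_in_time`, and `time_intersection`, the sample detections, and the "host and guest on screen together" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Label:
    """Hypothetical spatiotemporal label: a time span plus a bounding box.

    Spatial bounds are relative frame coordinates in [0, 1] and default to
    the full frame.
    """
    t1: float
    t2: float
    x1: float = 0.0
    x2: float = 1.0
    y1: float = 0.0
    y2: float = 1.0
    payload: Optional[str] = None


def overlaps_in_time(a: Label, b: Label) -> bool:
    """True when the two labels share a non-empty time interval."""
    return a.t1 < b.t2 and b.t1 < a.t2


def time_intersection(a: Label, b: Label) -> Label:
    """Label covering the shared time span (spatial extent reset to full frame)."""
    return Label(max(a.t1, b.t1), min(a.t2, b.t2), payload="host+guest")


def join(left: List[Label], right: List[Label],
         predicate: Callable[[Label, Label], bool],
         merge: Callable[[Label, Label], Label]) -> List[Label]:
    """Pair every left label with every right label that satisfies predicate."""
    return [merge(a, b) for a in left for b in right if predicate(a, b)]


def coalesce(labels: List[Label], max_gap: float = 0.0) -> List[Label]:
    """Merge labels whose gap in time is at most max_gap seconds."""
    merged: List[Label] = []
    for lab in sorted(labels, key=lambda l: l.t1):
        if merged and lab.t1 - merged[-1].t2 <= max_gap:
            prev = merged[-1]
            merged[-1] = Label(prev.t1, max(prev.t2, lab.t2), payload=prev.payload)
        else:
            merged.append(lab)
    return merged


# Made-up outputs of two pre-trained detectors, in seconds.
host = [Label(0, 10, payload="host"), Label(20, 30, payload="host")]
guest = [Label(5, 12, payload="guest"), Label(11, 25, payload="guest")]

# Candidate segments: spans where a host label and a guest label overlap.
candidates = join(host, guest, overlaps_in_time, time_intersection)

# Treat candidates separated by at most 10 seconds as one event.
interviews = coalesce(candidates, max_gap=10.0)
```

The point of the sketch is the composition pattern: the event of interest is written as a query over existing detector outputs rather than learned from newly labeled data. The 10-second gap is an arbitrary threshold chosen for the example.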

The research was published as part of the SOSP 2019 Workshop on AI Systems on 10/1/2019. The research is supported by the Brown Institute Magic Grant for the project Public Analysis of TV News.

Access the paper: https://arxiv.org/pdf/1904.11622.pdf

To contact the authors, please send a message to danfu@cs.stanford.edu.