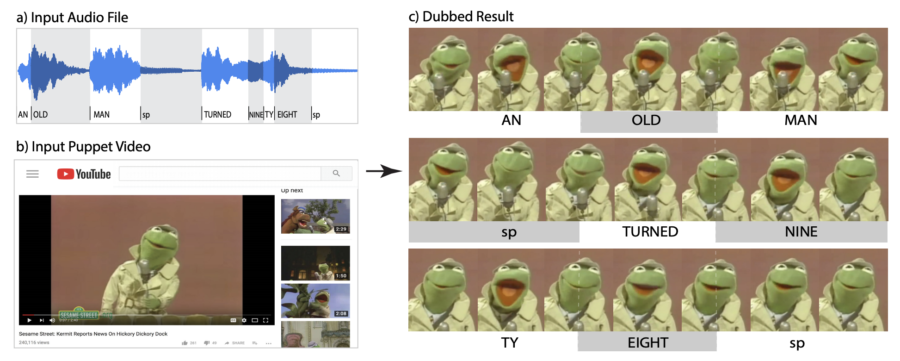

Given an audio file and a puppet video, we produce a dubbed result in which the puppet is saying the new audio phrase with proper mouth articulation. Specifically, each syllable of the input audio matches a closed-open-closed mouth sequence in our dubbed result. We present two methods, one semi-automatic appearance-based and one fully automatic audio-based, to create convincing dubs.

Authors

O. Fried and M. Agrawala

Abstract

Dubbing puppet videos to make the characters (e.g. Kermit the Frog) convincingly speak a new speech track is a popular activity with many examples of well-known puppets speaking lines from films or singing rap songs. But manually aligning puppet mouth movements to match a new speech track is tedious as each syllable of the speech must match a closed-open-closed segment of mouth movement for the dub to be convincing. In this work, we present two methods to align a new speech track with puppet video, one semi-automatic appearance-based and the other fully-automatic audio-based. The methods offer complementary advantages and disadvantages. Our appearance-based approach directly identifies closed-open-closed segments in the puppet video and is robust to low-quality audio as well as misalignments between the mouth movements and speech in the original performance, but requires some manual annotation. Our audio-based approach assumes the original performance matches a closed-open-closed mouth segment to each syllable of the original speech. It is fully automatic, robust to visual occlusions and fast puppet movements, but does not handle misalignments in the original performance. We compare the methods and show that both improve the credibility of the resulting video over simple baseline techniques, via quantitative evaluation and user ratings.

The research was published in The 30th Eurographics Symposium on Rendering (EGSR) on 7/10/2019. The research is part of Ohad Fried’s Brown Research Fellowship.

Access the paper: https://www.ohadf.com/papers/FriedAgrawala_EGSR2019.pdf

To contact the authors, please send a message to ohad@stanford.edu